ANTHROPIC vs. THE PENTAGON

AI Governance Dispute, DoD Ultimatum & the Palantir Connection

DATE: February 26, 2026 | STATUS: DEVELOPING SITUATION | PRIORITY: HIGH

Classification: Unclassified // Open Source Analysis

EXECUTIVE SUMMARY

A high-stakes confrontation between the U.S. Department of Defense (DoD) and AI developer Anthropic has reached a critical juncture. Defense Secretary Pete Hegseth has set a deadline of Friday, February 28, 2026 for Anthropic to grant unrestricted lawful military use of its Claude AI model. If Anthropic does not comply, it faces contract termination, possible designation as a supply chain risk, and possible invocation of the Defense Production Act. The dispute centers on Anthropic's refusal to remove guardrails prohibiting Claude's use in autonomous lethal targeting and mass domestic surveillance. Palantir Technologies, the intermediary through which Claude is deployed on classified DoD networks, sits at the center of the conflict. Source reporting characterizes the dispute partly as a power struggle between Anthropic leadership and the DoD in which Palantir is a central actor. Service disruptions to Claude Opus 4.6 and Claude for Government on February 25, 2026 coincided with the ultimatum meeting. An operational connection is possible but unconfirmed.

The Anthropic-DoD Relationship

Anthropic, founded in 2021 by former OpenAI executives, is a Public Benefit Corporation that markets itself as safety-focused and committed to responsible AI development. In July 2025, the Pentagon awarded Anthropic, along with Google, OpenAI, and Elon Musk's xAI, contracts worth up to $200 million each to prototype frontier AI capabilities for U.S. national security.

Crucially, Anthropic became the first AI company to gain approval for use on classified U.S. military networks. This deployment is conducted through a partnership with Palantir Technologies, a data analytics firm with deep ties to the defense and intelligence community.

The Palantir Connection

Palantir Technologies functions as the conduit between Anthropic's Claude models and classified DoD systems. Under a partnership announced in 2024, Claude is hosted on AWS GovCloud infrastructure and made accessible to intelligence and defense operations through Palantir's platform. As a result, whenever the DoD uses Claude in classified settings, Palantir is the intermediary managing that access.

Source intelligence indicates the current dispute is, at its core, a power struggle between Anthropic's leadership and the DoD, with Palantir Technologies as a central factor in that dynamic. This aligns with reporting that after news broke of Claude's use in the Venezuela operation, Anthropic contacted Palantir directly to raise usage policy concerns — suggesting Anthropic views Palantir as the responsible party for how Claude is deployed in classified operations.

SOURCE INTELLIGENCE NOTE

A source with firsthand knowledge of the situation characterizes this as 'a power play between the owner of Claude AI and the DoD... all has to do with Palantir Technology.' This is consistent with open-source reporting confirming Palantir's role as the sole classified-network intermediary for Claude, and with Anthropic's decision to raise concerns directly with Palantir after the Venezuela operation disclosure.

The Venezuela Operation (January 3, 2026)

The immediate trigger for the current dispute appears to be the January 3, 2026 U.S. special forces operation in Caracas, Venezuela, which resulted in the capture of President Nicolás Maduro. Reports from the Wall Street Journal and Axios, published February 13-14, 2026, revealed that Anthropic's Claude was used during this operation. The raid killed approximately 83 people, including 47 Venezuelan soldiers. It remains unclear precisely how Claude was employed; possibilities include drone coordination, image analysis, or communications summarization.

Following these disclosures, Anthropic reached out to Palantir to raise concerns about Claude's usage in the operation, referencing its hard limits on fully autonomous weapons and mass domestic surveillance. Anthropic's public statement framed this as a discussion of "specific Usage Policy questions."

Escalating Pentagon Demands (February 2026)

Throughout February 2026, the DoD escalated its demands. Pentagon leadership, including Under Secretary of Defense for Research and Engineering Emil Michael, held discussions with Anthropic that posed hypothetical scenarios involving AI use in missile defense and in responding to ICBM launches. The Pentagon expressed concern that Anthropic's guardrails could cause operational delays, for example by requiring Anthropic's sign-off in time-critical defense scenarios. Anthropic called this characterization "patently false."

Critically, xAI reached a deal with the Pentagon on February 23, 2026, agreeing to allow use of its Grok system for "any lawful purpose." The deal set a precedent and increased pressure on Anthropic to comply.

The Hegseth Ultimatum (February 25, 2026)

On Tuesday, February 25, Defense Secretary Pete Hegseth met with Anthropic CEO Dario Amodei at the Pentagon. In what was described as a "cordial" but firm meeting, Hegseth issued an ultimatum: provide a signed agreement granting full lawful military use of Claude by 5:01 PM Friday, February 28, or face:

Contract termination — loss of all DoD business

Designation as a supply chain risk — a classification typically reserved for foreign adversaries

Invocation of the Defense Production Act — which would compel Anthropic to comply with Pentagon requirements regardless of company policy

On Wednesday evening, February 26, Pentagon officials transmitted what was described as their "best and final offer" to Anthropic ahead of the Friday deadline. Whether this offer materially changed the DoD's position is unknown. As of the time of this brief, Anthropic had not publicly responded.

ANTHROPIC'S POSITION

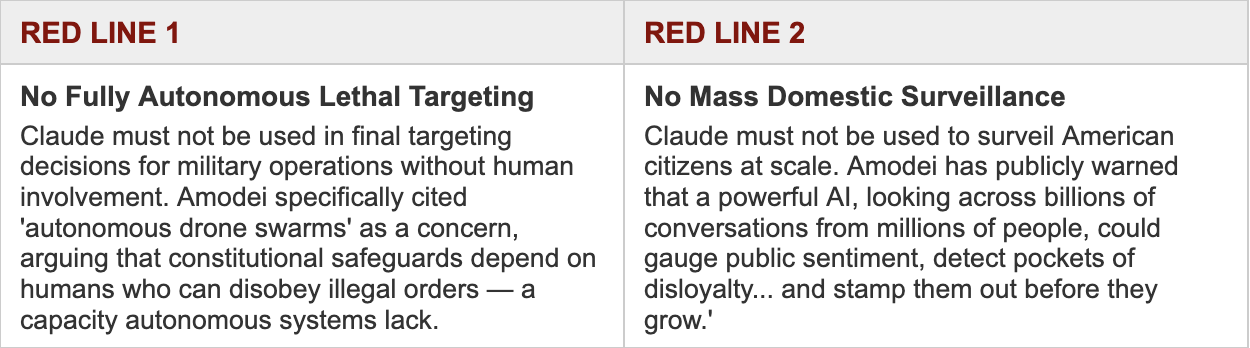

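Anthropic CEO Dario Amodei refused to budge on two specific issues during the February 25 meeting. The first is the use of Claude in fully autonomous weapons, including autonomous lethal targeting. The second is the use of Claude for mass domestic surveillance.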

Anthropic has, however, indicated willingness to support missile and cyber defense. The company confirmed in a statement that 'every iteration of our proposed contract language would enable our models to support missile defense and similar uses.' In December 2025 contract negotiations, Anthropic agreed to allow Claude for cyber and missile defense purposes, but the Pentagon rejected this as insufficient.

THE CLAUDE SYSTEM DISRUPTIONS — OPERATIONAL SIGNIFICANCE

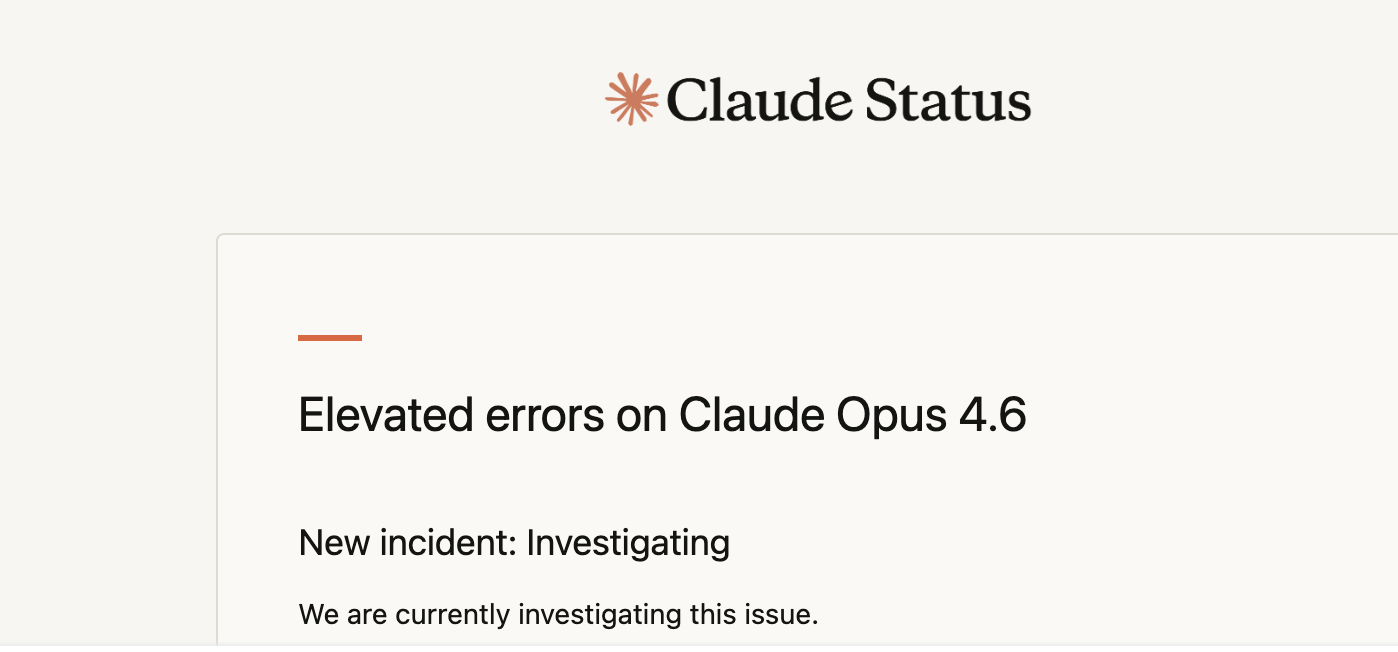

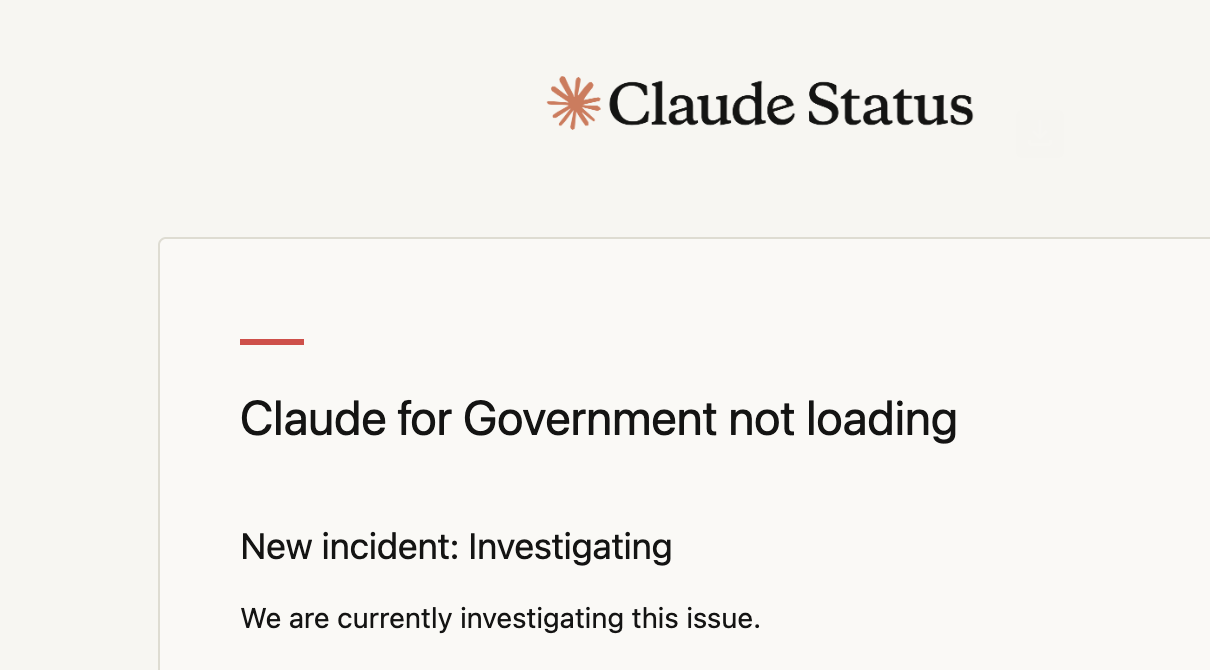

On February 25, 2026 — the same day as the Hegseth-Amodei ultimatum meeting — Claude's status page logged two simultaneous incidents:

INCIDENT 1: Elevated errors on Claude Opus 4.6 (New incident: Investigating)

INCIDENT 2: Claude for Government not loading (New incident: Investigating)

ANALYTICAL ASSESSMENT

These incidents occurred on the same day as the high-stakes ultimatum meeting, and that timing warrants attention. Claude Opus 4.6 is confirmed to be the primary model used by the DoD on classified networks through the Palantir partnership. The 'Claude for Government' outage is particularly notable. It affected the platform through which federal agencies, including defense entities, access Claude. Technical outages are often coincidental, but the timing raises the possibility of one of three explanations. First, elevated DoD usage surrounding the dispute may have stressed systems. Second, Anthropic may have taken proactive steps to limit or audit government access. Third, the dispute itself may have triggered backend system changes. None of these explanations can be confirmed with currently available information, but the overlap in timing should be tracked.

xAI Grok as a Pressure Lever

On February 23, 2026, Elon Musk's xAI reached a deal with the Pentagon agreeing to provide its Grok system for 'any lawful use' on classified networks — establishing the compliance standard the DoD is now demanding of Anthropic. This development materially strengthened the Pentagon's negotiating position, providing an immediate alternative and demonstrating that another frontier AI company was willing to meet DoD terms.

Financial & Political Dimensions

During Anthropic's $30 billion funding round in early 2026, the conservative-aligned venture capital firm 1789 Capital, whose partners include Donald Trump Jr., declined to invest and explicitly cited Anthropic's advocacy for AI regulation. This suggests the dispute extends beyond contract terms into broader political and ideological terrain. The Trump administration has explicitly framed AI safety guardrails as "ideological constraints" and has committed to what Defense Secretary Hegseth called "military AI dominance."

The Regulatory & Governance Stakes

The NYU Stern Center for Business and Human Rights assessed this dispute as 'a defining test of whether responsible AI deployment is genuinely possible — or merely aspirational — in an era of military AI competition.' Suppose the U.S. government responds to principled corporate limits by labeling the company a supply chain risk. That response would signal to the entire AI industry that maintaining ethical guardrails is a business liability, and it could reshape how all AI companies approach defense contracting.

TIMELINE OF KEY EVENTS

Jul 2025 - Pentagon awards contracts worth up to $200M each to Anthropic, Google, OpenAI, and xAI for national security AI prototyping.

Dec 2025 - Contract negotiations: Anthropic agrees to allow Claude for cyber and missile defense. Pentagon rejects this as insufficient.

Jan 3, 2026 - U.S. special forces raid Caracas; Maduro captured. Claude reportedly used in the operation. 83 deaths reported.

Jan 9, 2026 - Hegseth memo announces "AI-first warfighting force" strategy, calling for AI use free from "usage policy constraints."

Feb 13-14, 2026 - WSJ and Axios report Claude's use in Venezuela operation. Anthropic contacts Palantir to discuss usage policy concerns.

Feb 15, 2026 - Pentagon begins considering designating Anthropic a supply chain risk. An Anthropic safety researcher publicly resigns over AI concerns.

Feb 23, 2026 - xAI (Grok) reaches deal with Pentagon agreeing to 'any lawful use' on classified networks — establishing competitive precedent.

Feb 25, 2026 - Hegseth meets Amodei at Pentagon; issues 5:01 PM Friday deadline. Status page logs elevated errors on Claude Opus 4.6 and a 'Claude for Government' outage the same day.

Feb 26, 2026 - Pentagon transmits 'best and final offer' to Anthropic. Deadline status: PENDING.

Feb 28, 2026 - DEADLINE: Anthropic must comply or face contract loss, supply chain risk designation, and potential Defense Production Act invocation.

Source List

Jennifer Jacobs | Feb 26, 2026

NBC News — Anthropic says U.S. military can use its AI systems for missile defense

Jared Perlo & Gordon Lubold | Feb 25, 2026

ABC News — Pentagon-Anthropic ultimatum over AI technology

ABC News Politics | Feb 25, 2026

Al Jazeera — Anthropic vs the Pentagon: Why AI firm is taking on Trump administration

Sarah Shamim | Feb 25, 2026

NYU Stern BHR — The Cost of Conscience: What the Anthropic-Pentagon Feud Means for AI Governance

Luke Barnes | Feb 19, 2026

Wall Street Journal — Pentagon Used Anthropic's Claude in Maduro Venezuela Raid

WSJ National Security | Feb 13, 2026

Axios — Anthropic-Claude Maduro Raid Pentagon

Axios | Feb 13–15, 2026

Palantir Investor Relations — Anthropic and Palantir Partner to Bring Claude AI Models to AWS

Palantir Press Release | 2024

Anthropic — Anthropic and the Department of Defense to Advance Responsible AI in Defense Operations

Anthropic Press Release | Jul 2025

Claude Status Page — Elevated errors on Claude Opus 4.6 (Feb 25, 2026)

status.anthropic.com | Feb 25, 2026

Claude Status Page — Claude for Government not loading (Feb 25, 2026)

status.anthropic.com | Feb 25, 2026
